Before we took AI seriously at Indigo Tree, we were spending up to two days a month reviewing budgets and retainers and producing client reports. Over the past year, we’ve focused on practical, low-cost, high-impact changes. AI for agencies is about fixing the underlying processes first, then using AI to automate the boring or routine parts safely.

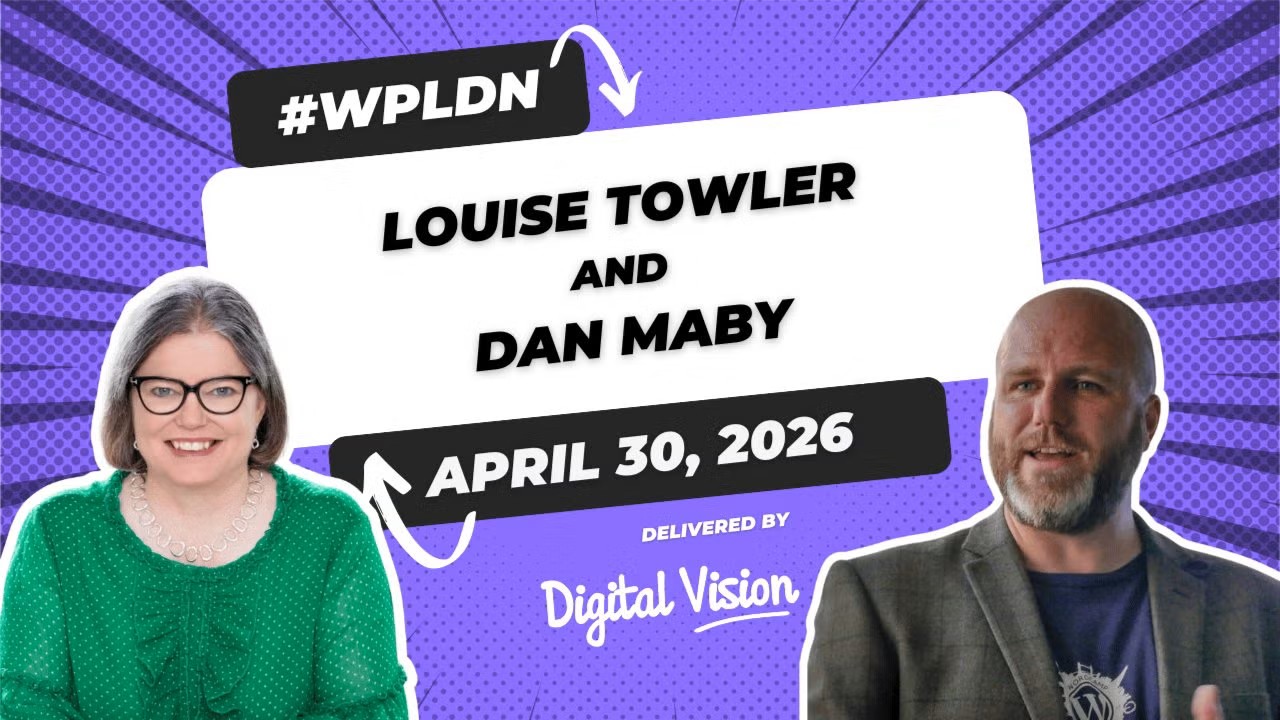

At the WordPress London Meetup in April 2026, I shared what a year of honest AI adoption actually looks like inside a digital agency: the wins, the failures, and the guardrails that stopped us from breaking things in production.

Here’s the write-up.

- Monthly client reporting: reduced to ~10 minutes per client (with human review)

- Time saved: ~1.5 days per month on reporting and admin

- Call notes: ~45 minutes saved per client call on write-ups

- Budget visibility: real-time tracking with alerts at 80% used

The empty pantry problem

Client reporting and budget tracking were taking up to two days of unbillable project management time every month. For us, AI for agencies started here: the team was checking time logs, reviewing tasks, and manually generating the reports.

On top of that, it took a while to check the care plan and retainer time for a specific client. We were a great agency running on some patchy data.

The rule we kept coming back to: you cannot automate a process that is not working. Let’s fix the basics first.

Mise en place: sorting Teamwork Projects before anything else

Getting the automations right

Before we brought AI anywhere near reporting, we rebuilt how Teamwork Projects and Teamwork Desk talked to each other.

When a new support ticket arrives, it now automatically receives an SLA and is linked to a task in the client’s care plan project, and that task is added to the backlog on the kanban board. Time can then be logged directly from the Desk ticket.

As the task moves through the board, the Desk ticket updates automatically, which cuts the time spent chasing updates and reduces the number of missed notifications.

Budget tracking that actually works

Retainer time is now set up as a monthly project budget in Teamwork, with unused time carried forward in line with our client terms. As time gets logged, the finance report updates in real time. We set alerts at 80% budget used. Project managers know where they stand on every account without opening a spreadsheet.
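A minimal sketch of that budget logic, for anyone who wants to reason about it outside Teamwork. It does not call the Teamwork API; the `RetainerMonth` class and its field names are hypothetical, and it assumes all unused time carries forward, which may not match your own client terms.

```python
from dataclasses import dataclass

ALERT_THRESHOLD = 0.80  # alert once 80% of the available budget is used


@dataclass
class RetainerMonth:
    """One client's retainer for a single month (hypothetical structure)."""

    monthly_hours: float    # hours included in the retainer each month
    carried_forward: float  # unused hours rolled over from earlier months
    logged_hours: float     # hours logged so far this month

    @property
    def available_hours(self) -> float:
        # Budget for the month is the retainer plus anything rolled over.
        return self.monthly_hours + self.carried_forward

    @property
    def fraction_used(self) -> float:
        # Treat a zero or negative budget as fully used to avoid dividing by zero.
        if self.available_hours <= 0:
            return 1.0
        return self.logged_hours / self.available_hours

    def needs_alert(self) -> bool:
        return self.fraction_used >= ALERT_THRESHOLD

    def carry_to_next_month(self) -> float:
        # Assumption: every unused hour carries forward; overspend does not go negative.
        return max(self.available_hours - self.logged_hours, 0.0)


if __name__ == "__main__":
    month = RetainerMonth(monthly_hours=10, carried_forward=2, logged_hours=10)
    print(f"{month.fraction_used:.0%} used, alert: {month.needs_alert()}")
    # prints "83% used, alert: True"
```

The point of modelling it this way is that the alert compares logged time against the retainer plus carried-forward time, not against the monthly retainer alone.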

Weekly utilisation reports are automatically generated, so we can see exactly how much time each team member is logging, with an overview for the senior leadership team on Monday morning, in time for our all-hands.

The development kitchen: Cursor and Sourcery

We use Cursor for Teams for development. It’s genuinely useful for boilerplate and exploration, but it still needs a developer who knows what they’re doing. It does not replace skill; it amplifies it.

Sourcery has been the quieter win. It runs on pull requests, catches issues, and has been very low-friction to adopt.

Our Engineering Director, Paul, has spent time setting up team-level rules: CSS standards, PHP standards, JavaScript rules, React standards, a WordPress-specific rule set, and a /deslop command to remove AI-generated padding from branches before they go anywhere near main.

Running the back office: Claude, Copilot and MCPs

The monthly client reports

This is the flagship. Claude Desktop, connected to Teamwork and SharePoint via MCPs, now generates our monthly client reports. It creates a new folder, pulls the time data from Teamwork as a CSV, copies the previous month’s Word document, and updates it with the new figures and time calculations.

An account manager always reviews it before it goes to the client. That review step is not optional; it is the whole point.

- Month one: it worked beautifully.

- Month two: Claude was updated and the formatting broke, so we refined the prompt.

- Month three: the numbers didn’t tally because a few people had logged time against the previous month, so this required a process fix, not an AI fix.

- Month four: the reports were generated efficiently, and the clients were happy.

Estimated saving: 1.5 days per month.
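The month-three problem (time logged against a month that had already closed) is the kind of thing a small pre-flight check can surface before a report is generated. The sketch below is an illustration only: the CSV column names `person`, `work_date`, `entered_on` and `hours` are assumptions, not the actual Teamwork export format.

```python
import csv
import io
from datetime import date


def late_entries(csv_text: str) -> list[dict]:
    """Return rows where time was logged against a month earlier than the month it was entered.

    Assumes ISO dates (YYYY-MM-DD) in hypothetical 'work_date' and 'entered_on' columns.
    """
    late = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        worked = date.fromisoformat(row["work_date"])
        entered = date.fromisoformat(row["entered_on"])
        # Compare (year, month) pairs so a December-to-January gap is handled correctly.
        if (worked.year, worked.month) < (entered.year, entered.month):
            late.append(row)
    return late


if __name__ == "__main__":
    sample = (
        "person,work_date,entered_on,hours\n"
        "Alex,2026-01-30,2026-02-03,1.5\n"
        "Sam,2026-02-02,2026-02-02,2.0\n"
    )
    for row in late_entries(sample):
        print(f"{row['person']} logged {row['hours']}h late for {row['work_date']}")
    # prints "Alex logged 1.5h late for 2026-01-30"
```

A check like this does not fix the process; it only flags which entries someone needs to chase before the figures are trusted.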

The other tools are doing real work

Fathom.Video records and summarises our client calls across Teams, Zoom and Google Meet, producing a full transcript, action points by person, and next steps. That saves roughly 45 minutes per call in note-taking and write-up time.

Grammarly is used across the entire client-facing team for emails, proposals, and content. It keeps our tone consistent and helps catch spelling and grammar mistakes.

Copilot and Claude Pro are used for research, content drafting, deep document analysis, and, increasingly, for help with tasks through custom prompts.

The AI Policy: guardrails, not handcuffs

We wrote our AI Policy before we had a problem, which is really important.

Every professional kitchen has health and safety rules. You label the containers. You don’t taste with the serving spoon. Not because the head chef doesn’t trust you, but because the rules are what allow everyone to cook with confidence.

Our policy protects three things: our reputation, client trust, and the team’s professional development.

The data protection rule that actually sticks is a simple question:

If this prompt leaked tomorrow, would I get fired?

A policy written before a problem is a set of guardrails; one written after a problem is damage control.

What we’re still cooking

The specials board isn’t empty, but not everything on it is ready yet.

We’re testing automated briefings from Fathom call transcripts, standardised quoting workflows to reduce scope creep, a five-step new project setup automation, and AI-generated theme JSON from design files.

For clients, we’ve built processes for AI-generated user personas, top-task analysis from customer data, tone-of-voice prompts, multilingual translation, and bulk alt text generation for existing media libraries.

We’re also paying close attention to how content needs to change for AI search visibility: structured data, llms.txt, schema markup, and writing answers to real questions rather than just landing pages.
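As one concrete example of schema markup for question-and-answer content, schema.org defines a `FAQPage` type that can be embedded as JSON-LD. The sketch below builds that structure from question and answer pairs; the `faq_jsonld` helper and the sample question are hypothetical, and this is not a description of our production setup.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    # Illustrative content only; swap in the real questions your page answers.
    print(faq_jsonld([("Example question?", "Example answer.")]))
```

The output would normally sit inside a `<script type="application/ld+json">` tag on the page that visibly contains the same questions and answers.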

Five things to take back to your kitchen

- Sort your pantry before you redesign the kitchen. Fix any broken processes first.

- Mise en place is the job. The boring automations are what make the interesting work reliable.

- Write the recipe before you need it. An AI Policy written proactively is a set of guardrails.

- Expect one dish to fail before service. Put a human in the loop from the start, and assume the models will change and break things.

- The menu is never finished. Are you creating new specials, or waiting for someone else’s?

A year in, client reporting takes ten minutes per client. Project managers have real-time budget visibility, and our time logging is up to date.

The pantry is organised, and our menu is longer than it was twelve months ago, but we’re still testing the specials!

FAQs about

Yes, and small teams can often move faster because there are fewer people to get on board. The key is to start with the most painful manual process and fix that first before reaching for anything more complex.

We haven’t built anything from scratch. Everything we’re using sits on top of tools the team was already working with, connected via APIs and MCPs. The skill lies in prompt and workflow design, not engineering.

The AI Policy does a lot of the work here, especially the single question about whether a prompt leak would get someone fired. But we also standardised on Copilot and Claude Pro, so the team has secure, licensed tools they trust, rather than reaching for free alternatives.

You build the human review step in from day one, so a human catches it before it reaches the client. Our reporting flow has an account manager sign-off before anything goes out. That single step is what allows the automation to exist.

By keeping a before figure. We knew client reporting was taking one to two hours per client per month. Now it takes under ten minutes. That’s the comparison. You can’t measure improvement without knowing your baseline.